Welcome to The Prompter, a newsletter about prompt engineering created by krea.ai.

TL;DR

You can use images as prompts for DALL·E.

“Megalophobia” is effective at making images with large-scale objects.

Stable diffusion is about to be released.

Thoughts on how larval models text-to-image models are.

Experiment: Visual Prompts

It turns out that DALL·E can also be conditioned with visual elements.

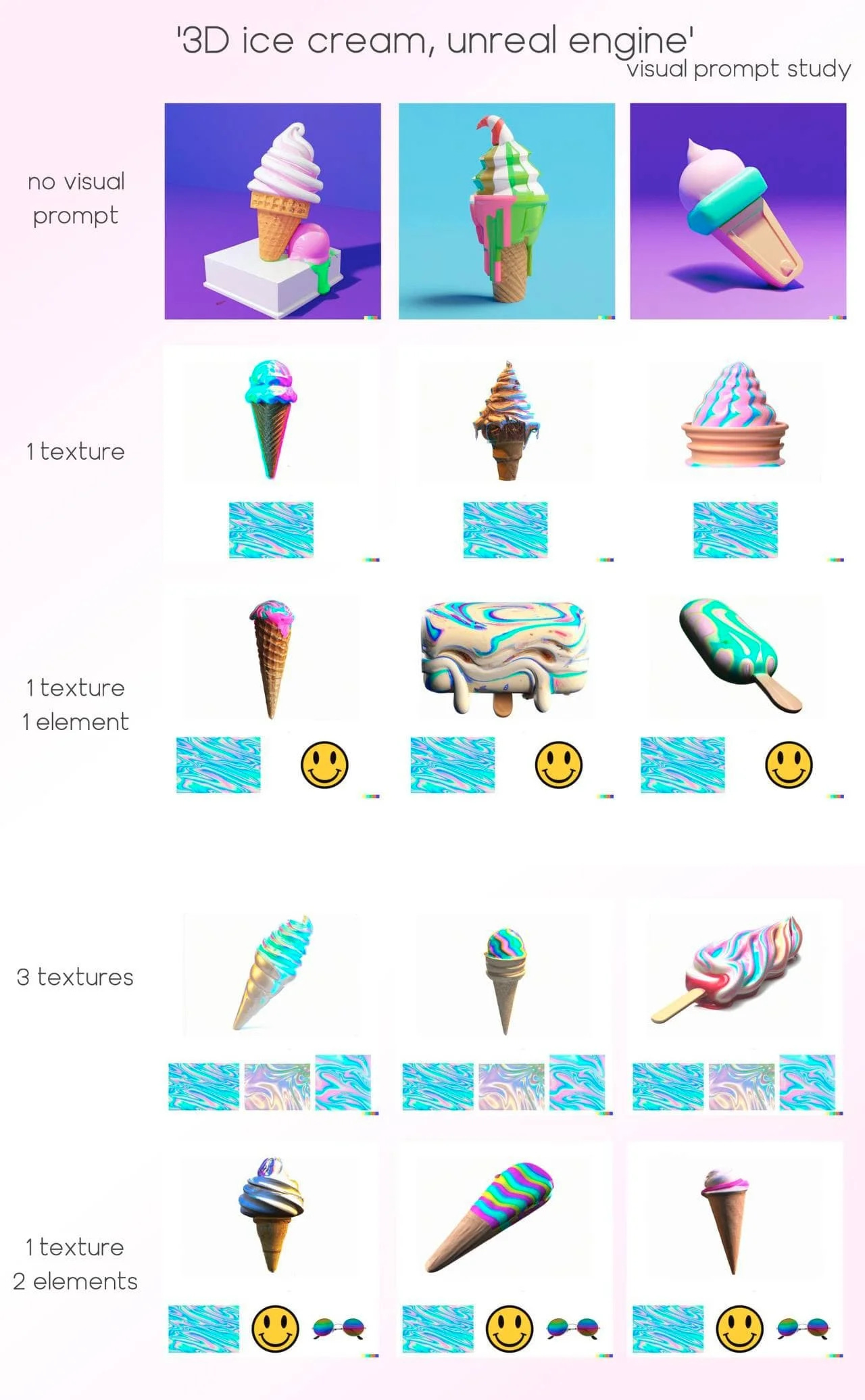

The ice cream in the following image was generated from the text “3D ice cream, unreal engine” without explicitly specifying any texture:

Yet, DALL·E took the style and shapes from the three images at the bottom and applied them to the ice cream.

These small images are what we call visual prompts, and they open the door to new creative ways of combining images with text in our generations.

The process for getting these results was simple. First, we took a blank squared image and we added the texture images that you can see at the bottom of the previous image. Note that these textures were not generated by DALL·E, we added them with a regular image editor. Then, we uploaded the image into DALL·E, and with the “Edit” mode we draw a squared region on top of these textures. Finally, we ran a generation with the text “3D ice cream, unreal engine”.

We're still figuring out the best ways to engineer visual prompts. The following is a set of experiments where you can see the differences depending on the visual prompts we used. For all the experiments, we cherry-picked the best 3 samples out of 2 DALL·E runs and we always used the same text prompt: “3D ice cream, unreal engine”.

This experiment shows how prompt engineering can go far beyond text prompts. There’s so much yet to be discovered!

Hot Prompts

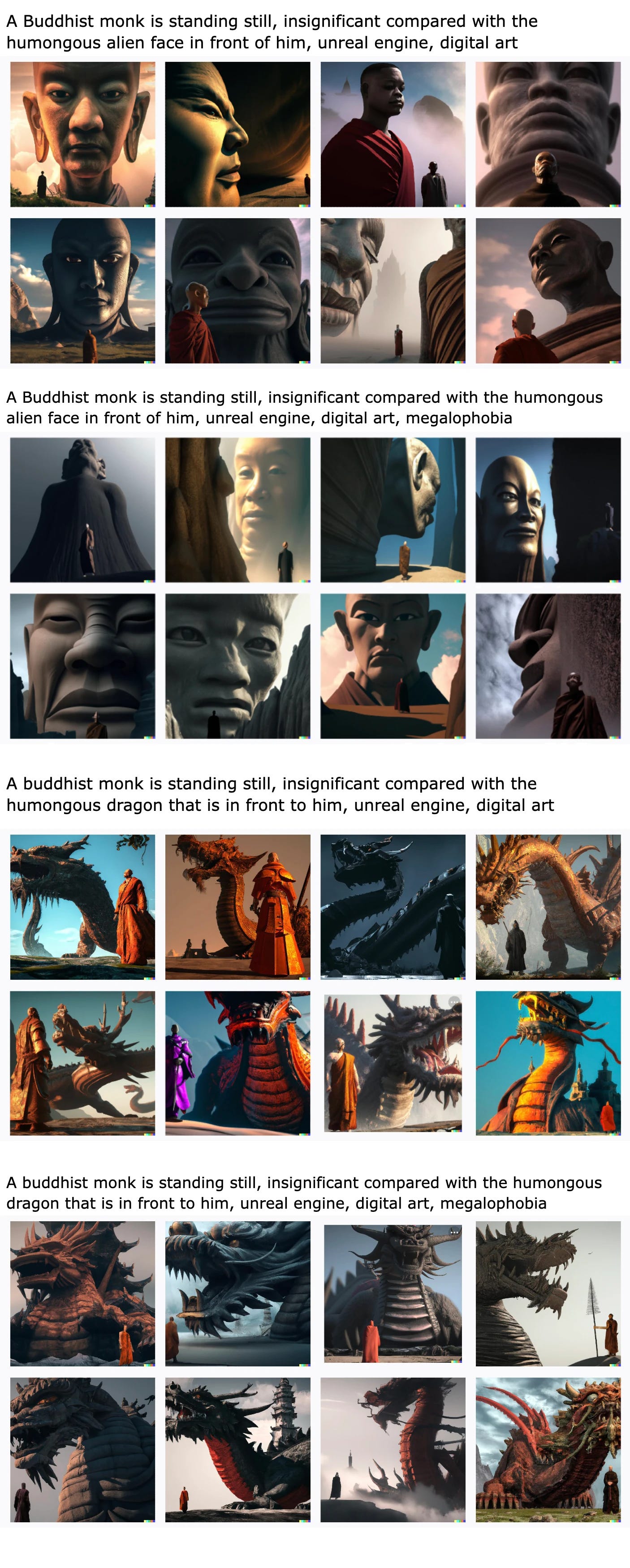

“Megalophobia”, by ButtCrackers#5126 in the OpenAI DALL·E 2 discord

This word is really effective for making images that need to have a small and a large-scale object at the same time.

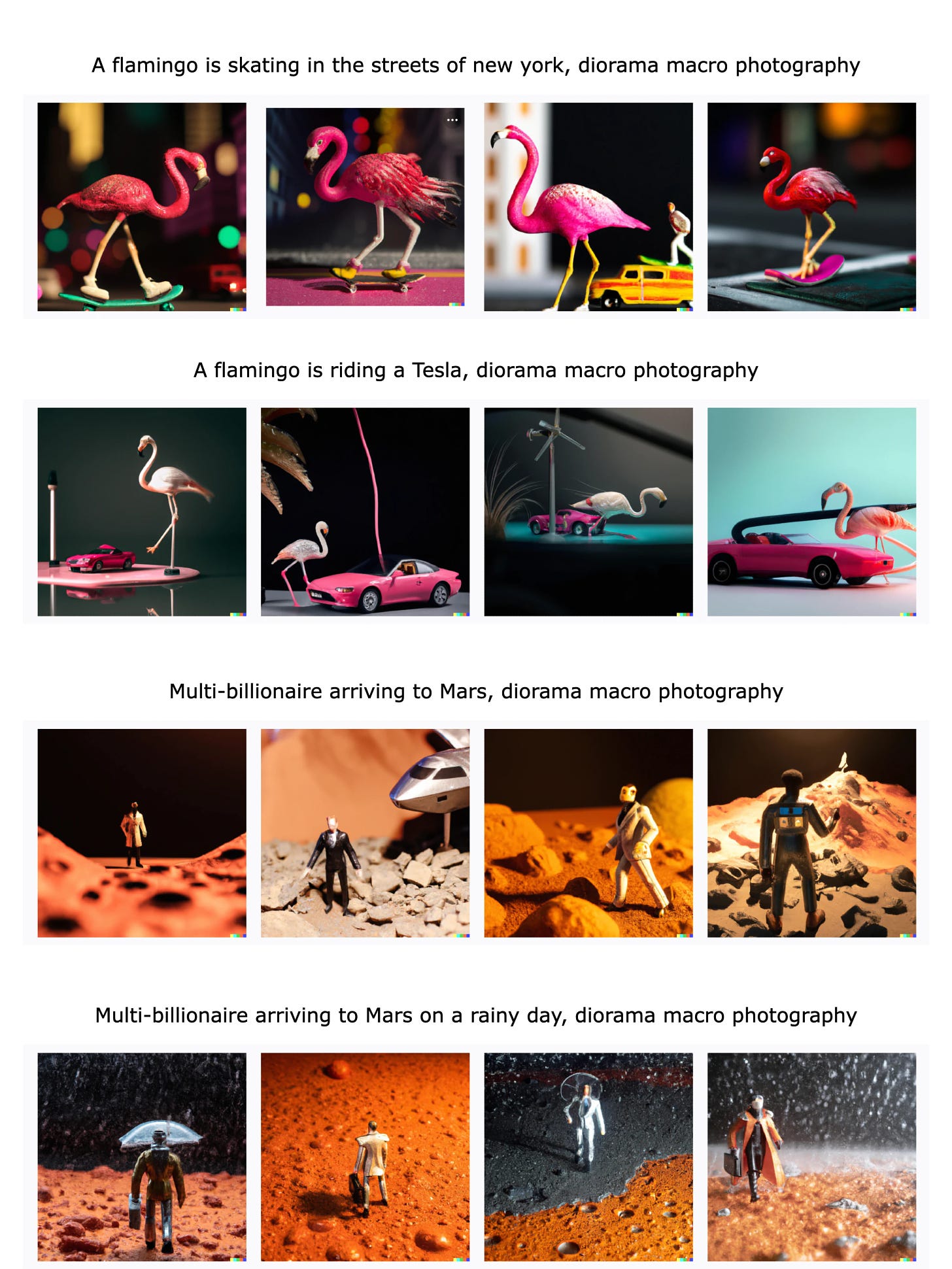

“Diorama macro photography”, by u/not_named_dan/ in the r/dalle2 subreddit

A diorama is a model representing a scene with three-dimensional figures, either in miniature or as a large-scale museum exhibit.

This type of photography was already referenced in the prompt book from dallery.gallery, check it out for more resources!

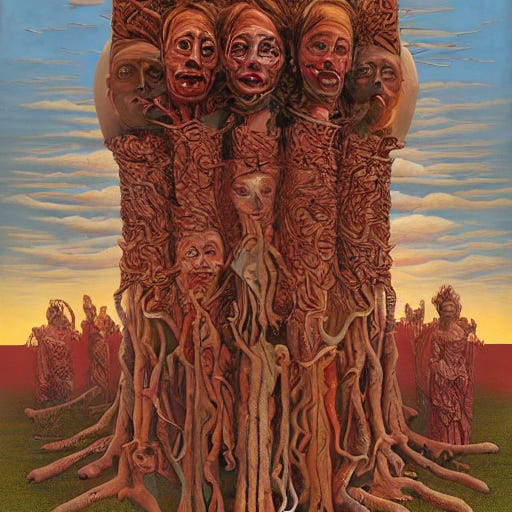

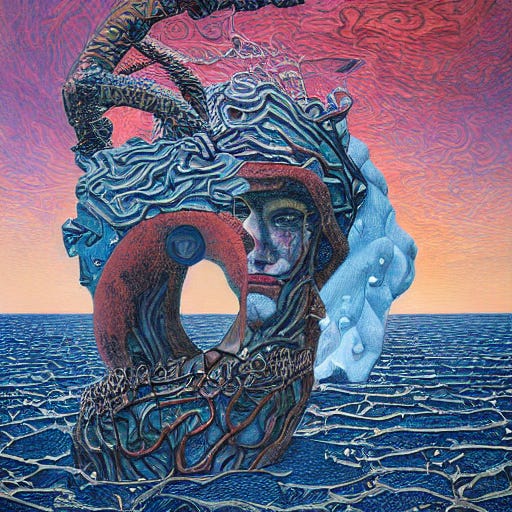

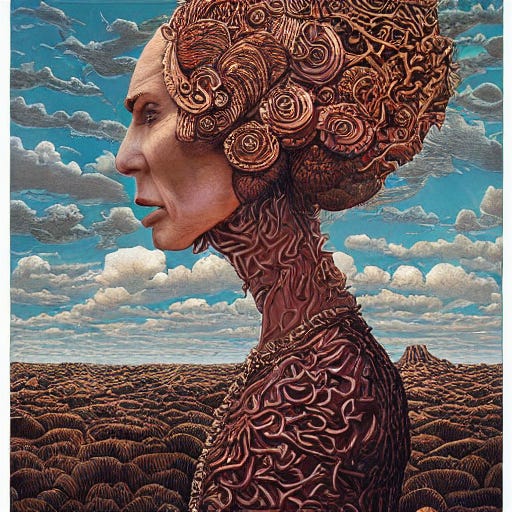

Deliriously happy + Otto Dix + Unreal Engine, by u/grim_bey in the r/dalle2 subreddit

Combining the style of a painter with a 3D renderer is an effective way to get nice 3D looks. The combination of “deliriously happy” with the style of “Otto Dix” already resulted in horrifically awesome results. Adding “unreal engine” to the prompt results in the following kinds of monsters:

AI Art

Jon Finger created a movie trailer just using AI. Such a creative use of AI tools, bravo!

New Version of Pixel-Art-Diffusion, from KaliYuga

New pixel art model, by Mario Klingemann

Midjourney V3 algorithms released

The results have fewer artifacts and two new prompt arguments are available: “stylize” and “quality”.

AI news

Stable diffusion is about to be released

This model consists of a larger and modified version of Latent Diffusion. These models are known for their efficiency when generating images, we can expect that it will be faster and cheaper than DALL·E—around 2.8s per run and 1/3 the cost.

This is going to be huge in the world of AI Art. Having access to the implementations of the models will enable AI Artists to use them in very creative ways. People will create their own versions specialized in different domains, such as concept art or logos.

The company behind the development of stale diffusion is stability.ai, who is also funding several AI communities such as EleutherAI or Laion.

Here are some examples of results produced by stable diffusion.

You can check out more examples in this unofficial subreddit (s/o to @djbaskin_images for sharing it!).

CogVideo is out

The authors of CogVideo made their model available. Here you can see some results and a tutorial we made to run it in an affordable way on Lambda Labs.

New Pre-trained Latent Diffusion Model

In particular, they released a pre-trained model using their retrieval-augmented diffusion models (RDMs) technique. This technique enhances the generation of images without the need of creating larger models.

Their idea is fairly simple, although it requires a bit of knowledge about diffusion models.

There are two types of diffusion models: unconditional and conditional. Unconditional diffusion models are trained to generate images from random noise—an image with random pixel values. Conditional models do not only learn to turn noise into a realistic image, but they also make sure that the image is coherent with a certain condition. This condition can be, for example, text. DALL·E is an example of a conditional diffusion model, since each image not only needs to look real but it also needs to represent the elements in the text.

In this new approach, they use a conditional diffusion model, but they do not condition it using the text or label from a particular image. Instead, for each image, they find a set of similar images that look similar, they compute the CLIP embeddings for each one, and they use the resulting encodings as the condition.

Once trained, thanks to CLIP being trained on images and texts, the resulting model can be conditioned with both, text prompts and images, and it can also work without any condition. The approach reminds a bit to unCLIP, due to their idea of leveraging the CLIP latent space to condition diffusion models.

Thoughts

Text-to-image models are still in their larval state. A year from now, we will laugh at what we call “hyper-realistic” today.

With models like DALL·E 2, we’re seeing equivalent results to the ones GPT-2 produced for text generation.

Although the results of GPT-2 were pretty convincing, the model tended to produce gibberish text that lacked a real understanding of the content it produced; the words were correct and well-placed one after the other but lacked any meaning.

Analogously, DALL·E 2 tends to produce convincing images. Yet, out of every set of generations, there are normally just a few results (if any) that grasp the purpose of the input text; they lack a deep understanding of the text-image relationship.

Making 100 or 500 times bigger GPT-2 models (like GPT-3 or PaLM) resulted in the solution to their lack of language understanding. These models tend to respond with ideas that make sense given their context, almost as a human would do.

When text-to-image models gain a human-like understanding of the relationship between a text prompt and its visual representation, we will be able to create any image we can imagine.

Once this happens, one of the most important skills for visual artists or content creators will be their capability for communicating effectively with AI models. Each model will “talk” a different language and will accept different kinds of prompts.

A good chunk of the future visual content that we produce will be created by AI—or at least AI will be a key component of its creative process. Plus, the amount of content that we will create will grow exponentially because of this technology.

We might write about the previous two ideas next week :) To finish, we want to make a quick last reflection about prompt engineering.

Prompt engineering is still a vaguely defined concept in our opinion. It is not limited to text and it will extend from image generation to video, audio, and 3D.

Here’s a fun exercise, we tried to create a dictionary description of prompt engineer:

Prompt engineer: a person who is skilled at feeding AI with text and/or other conditioners (i.e., prompts) to generate content on a single or multiple art mediums at a time.

Visit krea.ai, we’re building tools for prompt engineers <3